AI Data Center Power Consumption Market: The Energy Engine Behind the AI Revolution

Introduction: When Intelligence Meets Energy Demand

Artificial intelligence is reshaping the global economy—but powering this transformation requires massive amounts of electricity. From training large language models to running real-time AI applications, data centers are becoming the energy backbone of the digital world.

As AI adoption accelerates across industries, the demand for high-performance computing infrastructure is pushing energy consumption to unprecedented levels. This shift has given rise to the AI data center power consumption market, a rapidly growing sector focused on managing, optimizing, and sustaining energy usage in AI-driven environments.

Market Overview: Explosive Growth Driven by AI Expansion

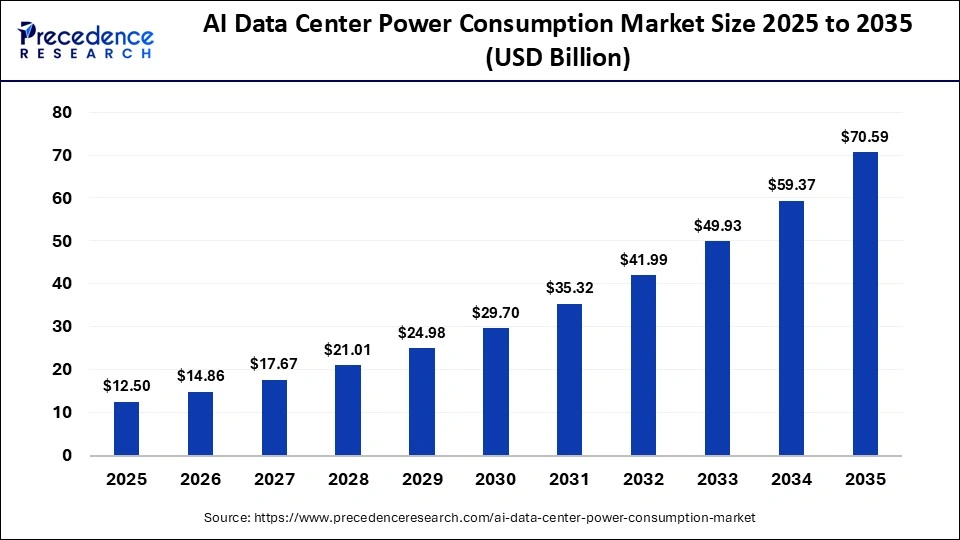

The global AI data center power consumption market was valued at USD 12.50 billion in 2025 and is expected to grow from USD 14.86 billion in 2026 to USD 70.59 billion by 2035, expanding at a CAGR of 18.90%.
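The growth rate can be sanity-checked from the endpoints quoted above. The sketch below is illustrative only: the function names `cagr` and `project` are made up for this example, and it assumes the 18.90% rate applies to the nine-year span from 2026 to 2035.

```python
def cagr(start_value: float, end_value: float, years: int) -> float:
    """Compound annual growth rate between two values over `years` periods."""
    return (end_value / start_value) ** (1 / years) - 1


def project(start_value: float, rate: float, years: int) -> float:
    """Value after compounding `start_value` at `rate` for `years` periods."""
    return start_value * (1 + rate) ** years


# Figures taken from the market overview (USD billion).
value_2025 = 12.50
value_2026 = 14.86
value_2035 = 70.59
stated_cagr = 0.1890

# Rate implied by the 2026 and 2035 endpoints (assumed 9 compounding periods).
implied_cagr = cagr(value_2026, value_2035, 2035 - 2026)

# Forward projections at the stated rate.
projected_2026 = project(value_2025, stated_cagr, 1)
projected_2035 = project(value_2026, stated_cagr, 2035 - 2026)

print(f"Implied CAGR 2026-2035: {implied_cagr:.2%}")
print(f"Projected 2026 value:   USD {projected_2026:.2f} billion")
print(f"Projected 2035 value:   USD {projected_2035:.2f} billion")
```

Under these assumptions the implied rate and both projections land within rounding distance of the published figures, so the three numbers in the overview are mutually consistent.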

This growth is fueled by:

- Rapid expansion of generative AI models

- Increasing deployment of GPU-intensive workloads

- Growth of hyperscale and cloud data centers

- Rising need for real-time AI processing

AI workloads require significantly more power than traditional computing, making energy consumption a central challenge for the industry.


Why AI Data Centers Consume So Much Power

AI systems are fundamentally more resource-intensive than traditional IT workloads. Training and deploying AI models involve:

- Processing massive datasets continuously

- Running computations across thousands of GPUs

- Maintaining high-speed networking and storage systems

Core Power Consumption Areas

- Compute (GPUs, TPUs, CPUs): AI accelerators consume significantly more energy than standard processors.

- Cooling Infrastructure: High-density hardware generates extreme heat, requiring advanced cooling solutions.

- Power Distribution Systems: Includes UPS systems, PDUs, and transformers for efficient power delivery.

- Networking & Storage: High-speed data transfer and storage operations add to energy demand.

Key Market Trends

1. Surge in Generative AI and Large Language Models

Generative AI is one of the biggest drivers of power demand. Training large-scale models requires enormous computational resources, often consuming megawatt-hours of electricity per model.

Research shows that AI supercomputers can require hundreds of megawatts of power, equivalent to the energy consumption of hundreds of thousands of households.
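To see how a power rating translates into a household comparison, the rough calculation below may help. Every input is an assumption chosen for illustration and none comes from the report: a hypothetical 300 MW facility, continuous operation all year, and an average household use of about 10,500 kWh per year (roughly the U.S. average; other countries differ widely).

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years

# Illustrative assumptions, not figures from the report.
facility_power_mw = 300            # hypothetical AI supercomputer draw
household_kwh_per_year = 10_500    # assumed average annual household use


def household_equivalent(power_mw: float, kwh_per_household: float) -> float:
    """Number of households whose annual use equals the facility's annual use,
    assuming the facility draws `power_mw` continuously."""
    annual_kwh = power_mw * 1_000 * HOURS_PER_YEAR  # MW -> kW, then kWh/year
    return annual_kwh / kwh_per_household


households = household_equivalent(facility_power_mw, household_kwh_per_year)
annual_twh = facility_power_mw * 1_000 * HOURS_PER_YEAR / 1e9  # kWh -> TWh

print(f"Annual consumption: {annual_twh:.2f} TWh")
print(f"Household equivalent: {households:,.0f}")
```

With these inputs the result is on the order of a few hundred thousand households, which matches the scale of the comparison in the text. Changing the assumed household figure shifts the result proportionally.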

2. Cooling Systems Becoming the Dominant Energy Segment

Cooling systems held a 30% market share in 2025, the largest of any component, and are expected to grow at the fastest rate.

Emerging Cooling Technologies:

- Liquid cooling

- Immersion cooling

- Hybrid cooling systems

These technologies are essential to maintain performance and prevent overheating in dense AI clusters.

3. Integration of Renewable Energy

To address environmental concerns and rising energy costs, data centers are increasingly adopting:

- Solar and wind energy

- Hydropower

- Energy storage systems

Sustainability is becoming a key competitive factor, with companies investing in green data centers.

4. Expansion of Hyperscale Data Centers

Hyperscale data centers dominated the market with a 45% share in 2025.

These facilities are designed to handle massive AI workloads, making them the primary contributors to global data center power consumption.

5. AI-Driven Power Optimization

AI is not only consuming power—it is also helping optimize it. Advanced software solutions are being used to:

- Monitor energy usage in real time

- Predict system failures

- Optimize cooling and workload distribution

Market Dynamics

Key Drivers

1. Rapid Growth of AI Applications

AI adoption across industries such as healthcare, finance, retail, and manufacturing is driving demand for high-performance computing.

2. Need for Real-Time Processing

Applications like autonomous systems and fraud detection require continuous, high-speed processing.

3. Cloud and Edge Computing Expansion

Cloud providers and edge infrastructure are scaling rapidly, increasing overall energy consumption.

Challenges

1. Power Infrastructure Limitations

Existing power grids are struggling to meet the rising demand from AI data centers.

2. High Operational Costs

Electricity is one of the largest expenses for data center operators.

3. Environmental Impact

AI-driven energy demand is raising concerns about carbon emissions and sustainability.

Recent reports suggest AI could consume up to 4.4% of global electricity by 2035, highlighting its growing environmental footprint.

Opportunities

1. Energy-Efficient AI Hardware

Development of low-power chips and optimized processors can reduce energy consumption.

2. Smart Energy Management Systems

AI-driven solutions can significantly improve efficiency and reduce waste.

3. Renewable-Powered Data Centers

Adoption of clean energy presents long-term growth opportunities.

Segment Analysis

By Component

- Cooling Systems – 30% (Largest Segment)

- UPS Systems – 25%

- Power Distribution Units – 20%

- Power Monitoring Software – 15%

- Backup Generators & Storage – 10%
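The component shares above can be turned into approximate 2025 dollar values by applying them to the USD 12.50 billion market size given in the overview. This is a rough sketch that assumes the percentages are shares of that 2025 total; the dictionary and variable names are illustrative.

```python
MARKET_SIZE_2025_USD_BN = 12.50  # from the market overview

# Component shares in percent, as listed in the segment analysis.
component_share_pct = {
    "Cooling Systems": 30,
    "UPS Systems": 25,
    "Power Distribution Units": 20,
    "Power Monitoring Software": 15,
    "Backup Generators & Storage": 10,
}

# The shares should account for the whole market.
total_pct = sum(component_share_pct.values())

# Approximate 2025 value per component (USD billion).
component_value_usd_bn = {
    name: MARKET_SIZE_2025_USD_BN * pct / 100
    for name, pct in component_share_pct.items()
}

print(f"Total share: {total_pct}%")
for name, value in component_value_usd_bn.items():
    print(f"{name:<30} USD {value:.2f} billion")
```

The shares sum to 100%, so under this assumption cooling systems alone would represent roughly USD 3.75 billion of the 2025 market.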

By Data Center Type

- Hyperscale Data Centers – 45% (Dominant)

- Colocation Data Centers – 25%

- Enterprise Data Centers – 20%

- Edge Data Centers – 10%

By Application

- AI Training Workloads – 35% (Largest)

- AI Inference Workloads – 25%

- Generative AI & LLMs – Fastest Growing

- High-Density GPU Clusters

- Edge AI Processing

By End-Use Industry

- IT & Telecommunications – 35% (Leading Segment)

- BFSI – 15%

- Healthcare – Fastest Growing

- Retail & E-commerce

- Government & Defense

- Manufacturing

Regional Insights

North America (Dominant Region)

- Strong presence of hyperscale data centers

- Advanced AI ecosystem

- High investment in cloud infrastructure

Asia Pacific (Fastest Growing Region)

- Rapid digital transformation

- Increasing AI adoption

- Expanding cloud infrastructure

Europe

- Focus on sustainability and energy efficiency

- Strict environmental regulations

Future Outlook: Balancing Innovation and Sustainability

The future of the AI data center power consumption market will be shaped by:

- Energy-efficient AI chips and processors

- Next-generation cooling technologies

- Smart grids and decentralized energy systems

- Increased use of renewable energy

At the same time, energy demand is expected to surge dramatically. Some projections suggest global data center electricity consumption could more than double by 2030, driven largely by AI workloads.

Conclusion

The AI revolution is fundamentally an energy revolution. As organizations continue to scale AI capabilities, the demand for electricity will become one of the most critical challenges—and opportunities—of the digital age.

With the market projected to reach USD 70.59 billion by 2035, the AI data center power consumption industry will play a pivotal role in shaping the future of technology, sustainability, and global infrastructure.

The companies that succeed will be those that can balance performance with efficiency, ensuring that the growth of AI remains both scalable and sustainable.

Get a Sample PDF: https://www.precedenceresearch.com/sample/8318
