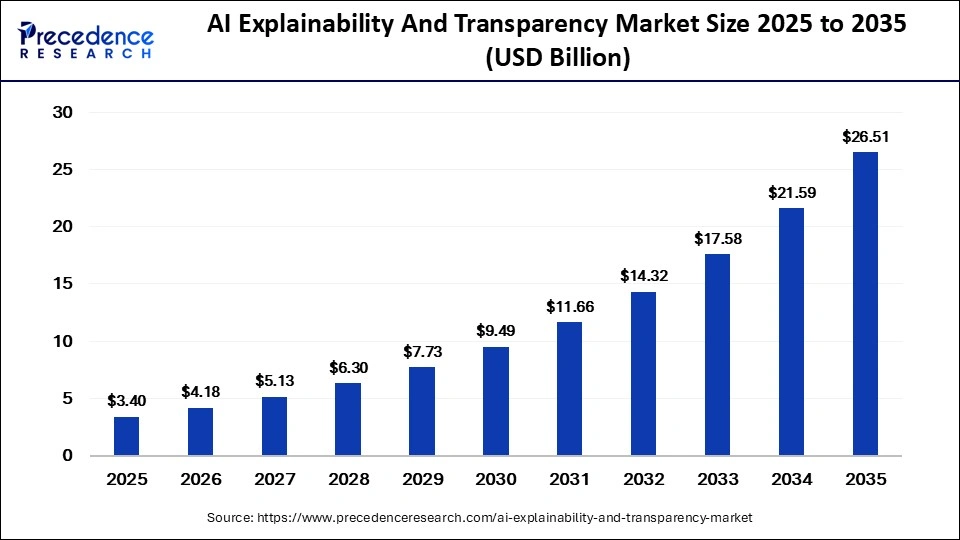

The global AI explainability and transparency market is projected to reach USD 26.51 billion by 2035, driven by regulatory pressure, ethical AI adoption, generative AI governance, and increasing enterprise demand for trustworthy artificial intelligence systems.

AI Explainability and Transparency Market Overview

The global AI explainability and transparency market is witnessing substantial growth as organizations increasingly focus on building ethical, accountable, and trustworthy artificial intelligence systems. According to Precedence Research, the market was valued at USD 3.40 billion in 2025 and is expected to grow from USD 4.18 billion in 2026 to approximately USD 26.51 billion by 2035, a CAGR of 22.80% over the 2026 to 2035 forecast period.
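As a quick arithmetic check on the figures above, the compound annual growth rate can be recomputed from the start and end values. The short Python sketch below is an illustration only; the function name `cagr` is an assumption introduced here and does not come from the Precedence Research report.

```python
def cagr(start_value, end_value, years):
    """Return the compound annual growth rate as a fraction (0.228 means 22.8%)."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1 / years) - 1


# Market sizes quoted above, in USD billion.
rate_2026_2035 = cagr(4.18, 26.51, 2035 - 2026)  # nine-year span
rate_2025_2035 = cagr(3.40, 26.51, 2035 - 2025)  # ten-year span

print(f"2026 to 2035: {rate_2026_2035:.2%}")
print(f"2025 to 2035: {rate_2025_2035:.2%}")
```

Both calculations land within a fraction of a percentage point of the quoted 22.80%, which suggests the published figures are internally consistent.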

AI explainability and transparency technologies are becoming essential for enterprises deploying AI-driven decision-making systems across industries such as banking, healthcare, retail, manufacturing, telecommunications, government, and cybersecurity. Organizations increasingly require AI systems capable of explaining how outputs, predictions, and recommendations are generated.

The growing adoption of generative AI, autonomous systems, machine learning models, and predictive analytics has intensified concerns regarding “black-box” AI systems that operate without clear visibility into their decision-making processes. As a result, enterprises and regulators are investing heavily in explainable AI (XAI), governance frameworks, bias detection systems, and transparency solutions.

Understanding AI Explainability and Transparency

AI explainability refers to the ability of artificial intelligence systems to provide understandable explanations regarding how decisions or predictions are made. Transparency involves making AI systems more visible, traceable, and accountable to users, regulators, and organizations.

These technologies help businesses:

- Understand AI-generated decisions

- Improve trust in AI systems

- Detect and reduce algorithmic bias

- Ensure compliance with regulations

- Monitor AI behavior continuously

- Improve model accountability

- Validate automated recommendations

Explainable AI solutions are particularly important in high-risk industries where automated decisions directly impact individuals, including healthcare diagnostics, loan approvals, fraud detection, insurance underwriting, and hiring systems.

Key Market Drivers

Rising Demand for Ethical and Responsible AI

One of the primary factors driving the AI explainability and transparency market is the increasing global demand for ethical AI systems.

Organizations deploying AI technologies now face growing pressure from regulators, customers, and stakeholders to ensure fairness, accountability, and transparency in automated decision-making.

Businesses increasingly require tools capable of explaining how AI models generate predictions and recommendations, especially in critical applications such as:

- Credit scoring

- Fraud detection

- Recruitment

- Healthcare diagnostics

- Insurance risk assessment

- Cybersecurity monitoring

Explainability technologies help organizations strengthen customer trust while minimizing operational and reputational risks.

The growing adoption of autonomous AI agents and generative AI systems is further intensifying the need for explainable and auditable AI infrastructure.

Increasing Regulatory Pressure Worldwide

Governments and regulatory bodies worldwide are implementing stricter AI governance and compliance requirements.

Regulations such as the European Union AI Act and GDPR are accelerating enterprise investments in transparency and explainability solutions. Organizations increasingly require systems capable of supporting:

- Audit trails

- Bias detection

- Risk monitoring

- Model documentation

- Compliance reporting

- Decision traceability

Regulatory scrutiny surrounding AI fairness, privacy, and accountability is expected to remain one of the strongest long-term growth drivers for the market.
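To illustrate what audit trails and decision traceability can look like in practice, the sketch below keeps a tamper-evident log of model decisions by chaining SHA-256 hashes. It is one possible approach under stated assumptions, not a method prescribed by the EU AI Act, GDPR, or the report; the function names, record fields, and sample values are all hypothetical.

```python
import hashlib
import json
from datetime import datetime, timezone

GENESIS_HASH = "0" * 64  # placeholder hash used before the first record


def _digest(body):
    """Hash a record body deterministically (keys sorted before serializing)."""
    return hashlib.sha256(json.dumps(body, sort_keys=True).encode("utf-8")).hexdigest()


def append_record(log, model_id, inputs, output, explanation):
    """Append one decision record that links back to the previous record's hash."""
    body = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_id": model_id,
        "inputs": inputs,
        "output": output,
        "explanation": explanation,
        "prev_hash": log[-1]["hash"] if log else GENESIS_HASH,
    }
    record = {**body, "hash": _digest(body)}
    log.append(record)
    return record


def verify_chain(log):
    """Return True only if no record has been altered, removed, or reordered."""
    prev_hash = GENESIS_HASH
    for record in log:
        body = {key: value for key, value in record.items() if key != "hash"}
        if record["prev_hash"] != prev_hash or record["hash"] != _digest(body):
            return False
        prev_hash = record["hash"]
    return True


# Hypothetical usage with made-up decisions.
log = []
append_record(log, "credit-model-v1", {"income": 52000, "debt_ratio": 0.41},
              "declined", "debt_ratio above threshold")
append_record(log, "credit-model-v1", {"income": 78000, "debt_ratio": 0.18},
              "approved", "all checks passed")
print(verify_chain(log))
```

Because each record stores the hash of the one before it, editing an earlier decision after the fact causes `verify_chain` to fail, which is the property an auditor would rely on.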

Rapid Expansion of Generative AI

The explosive growth of generative AI technologies is significantly boosting demand for explainability solutions.

Large language models (LLMs), AI copilots, and autonomous agents are increasingly integrated into enterprise workflows, creating new challenges related to hallucinations, misinformation, and decision accountability.

Organizations are implementing explainability layers such as:

- Confidence scoring

- Source attribution

- Model interpretability tools

- AI monitoring systems

- Human oversight frameworks

These technologies help enterprises improve governance and maintain trust in AI-generated outputs.

Rising Adoption Across BFSI and Healthcare

The BFSI (banking, financial services, and insurance) sector accounted for approximately 30% of the market in 2025, making it the leading end-use industry.

Financial institutions increasingly deploy explainability tools to improve transparency in:

- Fraud detection

- Credit approvals

- Risk management

- Regulatory compliance

- Customer verification systems

Healthcare organizations are also rapidly adopting explainable AI solutions to support transparent diagnostics, treatment recommendations, and patient monitoring systems.

The ability to explain AI-generated medical decisions is becoming essential for improving clinician confidence and patient safety.

Market Restraints

Complexity of Interpreting Advanced AI Models

One of the major challenges in the market is the technical complexity involved in interpreting advanced neural networks and deep learning systems.

Highly sophisticated AI models often function as “black boxes,” making it difficult to fully explain how outputs are generated. Balancing model accuracy with explainability remains a major technical challenge for enterprises and developers.

Lack of Standardized Explainability Frameworks

The absence of universal explainability standards creates implementation challenges across industries.

Different organizations and regulators frequently define explainability, transparency, interpretability, and traceability differently, resulting in inconsistent governance practices and operational uncertainty.

This lack of standardization may slow enterprise-scale adoption in highly regulated industries.

Integration Challenges with Existing Systems

Many organizations struggle to integrate explainability tools with existing enterprise AI infrastructure and legacy analytics systems.

Complex AI environments often require customized governance frameworks capable of supporting multiple models, data pipelines, and compliance requirements simultaneously.

Emerging Opportunities

Growth of Responsible AI Governance Platforms

The emergence of enterprise-wide responsible AI governance ecosystems is creating substantial opportunities for explainability solution providers.

Organizations increasingly establish dedicated responsible AI teams focused on:

- Fairness monitoring

- Transparency management

- Compliance oversight

- AI risk assessment

- Governance automation

Explainability solutions are becoming critical components of enterprise AI lifecycle management systems.

Rising Demand for Bias Detection and Fairness Tools

Bias detection and fairness monitoring represent one of the fastest-growing market segments.

The bias detection and fairness tools segment accounted for approximately 22% of the market share in 2025 and is projected to grow at a CAGR of 25.5% through 2035.

Growing concerns surrounding discriminatory outcomes in AI-powered hiring, lending, healthcare, and insurance applications are accelerating global demand for fairness-focused AI governance solutions.
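To show the kind of check a bias detection tool might run, the sketch below compares approval rates across groups using two common fairness measures: the demographic parity difference and the disparate impact ratio. It is a minimal illustration; the function names are assumptions introduced here, and the decisions and group labels are invented toy data rather than anything from the report.

```python
from collections import defaultdict


def selection_rates(decisions, groups):
    """Return the share of positive decisions (1 = approved) for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for decision, group in zip(decisions, groups):
        totals[group] += 1
        positives[group] += int(decision)
    return {group: positives[group] / totals[group] for group in totals}


def demographic_parity_difference(rates):
    """Gap between the highest and lowest group selection rates (0 means parity)."""
    return max(rates.values()) - min(rates.values())


def disparate_impact_ratio(rates):
    """Lowest selection rate divided by the highest (1 means parity)."""
    highest = max(rates.values())
    if highest == 0:
        return 1.0  # no group received a positive decision, so rates are equal
    return min(rates.values()) / highest


# Invented toy data: ten loan decisions across two hypothetical groups.
decisions = [1, 1, 1, 0, 1, 0, 0, 1, 0, 0]
groups = ["A"] * 5 + ["B"] * 5

rates = selection_rates(decisions, groups)
print(rates)
print(demographic_parity_difference(rates))
print(disparate_impact_ratio(rates))
```

In this toy data, group A is approved four times out of five and group B once out of five, so both measures flag a large gap that a fairness team would want to investigate.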

Expansion of Explainable AI in Cybersecurity

Cybersecurity is emerging as a significant application area for explainable AI technologies.

Transparent AI models help security teams:

- Validate threat intelligence

- Reduce false positives

- Improve trust in automated security systems

- Strengthen compliance monitoring

- Enhance incident response transparency

Financial institutions and enterprise IT organizations increasingly prioritize explainability within cybersecurity operations.

Segment Analysis

Software Segment Dominates the Market

By component, the software segment accounted for nearly 70% of the market share in 2025 due to growing adoption of AI governance platforms, interpretability software, and automated monitoring systems.

Organizations increasingly require software solutions capable of delivering:

- Real-time model monitoring

- Audit trails

- Bias detection

- Explainability dashboards

- Governance automation

- Compliance management

The services segment is also witnessing steady growth as enterprises seek consulting and implementation support for responsible AI strategies.

Cloud Deployment Leads the Market

Cloud-based deployment dominated the market with approximately 75% share in 2025 due to scalability, flexibility, and lower infrastructure costs.

Cloud-native explainability platforms enable enterprises to integrate governance systems into AI workflows more efficiently while supporting centralized monitoring and real-time analytics.

Model Interpretability Tools Hold Largest Share

The model interpretability tools segment led the market with approximately 28% share in 2025.

These solutions help organizations understand feature importance, decision logic, model behavior, and prediction pathways.

Meanwhile, model monitoring and auditing systems are rapidly gaining traction as enterprises seek continuous oversight of AI systems.
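To make the feature-importance idea concrete, the sketch below implements permutation importance in plain Python: each feature column is shuffled in turn, and the resulting increase in prediction error indicates how much the model relies on that feature. This is one widely used interpretability technique, chosen here for illustration; the report does not name a specific method, and `toy_model` and its data are hypothetical.

```python
import random


def mean_squared_error(model, rows, targets):
    """Average squared difference between model predictions and targets."""
    return sum((model(row) - target) ** 2 for row, target in zip(rows, targets)) / len(rows)


def permutation_importance(model, rows, targets, n_repeats=20, seed=0):
    """Return, per feature, the mean increase in error after shuffling that feature."""
    rng = random.Random(seed)
    baseline = mean_squared_error(model, rows, targets)
    importances = []
    for j in range(len(rows[0])):
        increases = []
        for _ in range(n_repeats):
            column = [row[j] for row in rows]
            rng.shuffle(column)
            # Rebuild every row with only feature j replaced by a shuffled value.
            shuffled = [row[:j] + [value] + row[j + 1:] for row, value in zip(rows, column)]
            increases.append(mean_squared_error(model, shuffled, targets) - baseline)
        importances.append(sum(increases) / n_repeats)
    return importances


def toy_model(row):
    """Hypothetical model: leans on feature 0, slightly on feature 1, ignores feature 2."""
    return 3.0 * row[0] + 0.5 * row[1]


data_rng = random.Random(42)
rows = [[data_rng.random() for _ in range(3)] for _ in range(200)]
targets = [toy_model(row) for row in rows]

scores = permutation_importance(toy_model, rows, targets)
for index, score in enumerate(scores):
    print(f"feature {index}: {score:.4f}")
```

The scores rank feature 0 well above feature 1, while the ignored feature 2 scores zero, matching how the toy model was built. The same procedure works with any model that can be called on a row, which is why it is often used on otherwise opaque systems.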

Regional Analysis

North America Dominates the Global Market

North America accounted for approximately 44% of the global market share in 2025 due to advanced AI infrastructure, strong enterprise adoption, and significant investments in responsible AI initiatives.

The United States remains the dominant regional market, supported by increasing deployment of explainable AI solutions across financial services, healthcare, cybersecurity, and enterprise automation sectors.

The U.S. AI explainability and transparency market is projected to reach nearly USD 8.91 billion by 2035.

Asia-Pacific Expected to Witness Fastest Growth

Asia-Pacific is projected to grow at the fastest CAGR of 26.5% during the forecast period.

Rapid digital transformation, rising AI adoption, government-backed AI initiatives, and increasing focus on ethical AI governance are driving regional expansion.

Countries such as India, China, Japan, and South Korea are becoming major hubs for responsible AI development and governance innovation.

Europe Maintains Strong Growth Momentum

Europe continues to maintain a strong market position due to strict regulatory frameworks surrounding AI governance and data privacy.

The European Union AI Act and the GDPR are significantly accelerating enterprise investments in explainability and transparency solutions across the banking, healthcare, insurance, and government sectors.

Competitive Landscape

The AI explainability and transparency market is highly competitive, with technology companies, consulting firms, and enterprise software providers investing heavily in responsible AI technologies.

Key Companies Operating in the Market

Major market players include:

- IBM

- Microsoft

- Google Cloud

- Amazon Web Services

- Oracle

- Salesforce

- Accenture

- Deloitte

- Infosys

- Tata Consultancy Services (TCS)

Get a Sample Copy: https://www.precedenceresearch.com/sample/8405

For inquiries regarding discounts, bulk purchases, or customization requests, please contact us at sales@precedenceresearch.com